We want the internet to be safer. For our kids. For ourselves. We want to communicate, find information, collaborate, create, share, engage, participate and have fun. We want to seek out what we need — including the full range of adult content that adults have always sought — in ways that are appropriate to who we are and where we are in our lives. We want age-appropriate access that doesn’t require us to hand over our passports to every platform we visit. We want the architectural conditions of digital life to be designed for human flourishing rather than engineered for compulsive use. And we don’t want the solution to these problems to be a surveillance infrastructure that violates the privacy rights it claims to protect.

These are not unreasonable things to want. They are, in fact, the things that good digital regulation should deliver.

But there is something more specific underneath all of this. We want digital environments that lean toward a caring orientation. Spaces where the default assumption is that users are people with complex needs, relationships, vulnerabilities and capacities — not attention units to be harvested. Where the architecture of the platform supports human connection rather than exploiting it. Where the experience of being online doesn’t require constant vigilance against the system that is supposed to be serving you.

That is the design brief. And almost nothing about the regulatory choices being made right now — in Australia, in Europe, in the UK, and across the globe — is actually building toward it.

The Market Logic Nobody Wants to Name

Before getting to the policy failures, it is worth being precise about why they keep happening. The answer lies in market logic that is so entrenched, so global, and so structurally opposed to a caring orientation that no single national regulatory instrument can adequately address it.

The incumbent platforms — Meta, TikTok, Google, Snap — are not primarily communication services that have some problematic features. They are attention extraction machines that have communication as a byproduct. The product is engagement. The inventory is human time and psychological state. The business model optimises for the time users spend in states of arousal, comparison, compulsive return, and social anxiety — because those states generate the engagement signals that drive advertising revenue.

Every design feature that has been identified as harmful — infinite scroll, algorithmic recommendation, social feedback loops, disappearing content, notification systems, engagement-maximising AI — is not incidental to how these platforms make money. It is how they make money. The harm is the business model. The architecture that exploits developing brains is the same architecture that generates billions in revenue. Internal corporate communications — made visible through litigation processes rather than through corporate transparency — show that companies knew this and chose not to adequately address it. This is evidence of deliberate design intent, not corporate negligence.

Regulation that doesn’t change this underlying market logic doesn’t address the problem. An age ban doesn’t change the market logic — it removes a demographic without reforming the architecture that exploits them. Age verification doesn’t change the market logic — it adds a compliance cost that large platforms absorb and small competitors cannot. Even design obligations only change the market logic if the penalties make harmful features more expensive than the revenue they generate. For Meta, whose annual global revenue exceeded USD200 billion in 2025, a flat AUD49.5 million fine — Australia’s maximum penalty — is a rounding error. It does not change the calculation.

This is why the financial structure of regulation is not a technical detail. It is the mechanism by which regulation actually changes what the market produces. Penalties proportionate to global turnover — 5% to 10% — make the cost of harmful architecture real in a way that flat caps never can. Design obligations without proportionate penalties are aspirations. Design obligations with proportionate penalties are market signals.
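The arithmetic behind that claim can be made concrete. The sketch below is illustrative only: it uses the revenue figure and flat cap quoted above, and it assumes a hypothetical AUD-to-USD exchange rate of 0.65 purely to put both penalties in one currency. The function and constant names are invented for this example.

```python
# Illustrative comparison of a flat penalty cap with turnover-linked penalties.
# Revenue and the flat cap come from the text above; the exchange rate is an
# ASSUMPTION used only to express both penalties in a single currency.

ANNUAL_REVENUE_USD = 200e9   # "exceeded USD200 billion", used as a lower bound
FLAT_CAP_AUD = 49.5e6        # Australia's maximum flat penalty
ASSUMED_AUD_TO_USD = 0.65    # hypothetical rate, not a quoted figure


def flat_penalty_usd(cap_aud: float, aud_to_usd: float) -> float:
    """Convert the flat cap into USD at the assumed exchange rate."""
    return cap_aud * aud_to_usd


def proportional_penalty_usd(revenue_usd: float, rate: float) -> float:
    """Penalty set as a share of global annual turnover."""
    return revenue_usd * rate


def share_of_revenue(penalty_usd: float, revenue_usd: float) -> float:
    """Penalty expressed as a fraction of annual revenue."""
    return penalty_usd / revenue_usd


flat = flat_penalty_usd(FLAT_CAP_AUD, ASSUMED_AUD_TO_USD)
low = proportional_penalty_usd(ANNUAL_REVENUE_USD, 0.05)
high = proportional_penalty_usd(ANNUAL_REVENUE_USD, 0.10)

for label, penalty in (("flat cap", flat), ("5% of turnover", low), ("10% of turnover", high)):
    pct = share_of_revenue(penalty, ANNUAL_REVENUE_USD)
    print(f"{label:>16}: USD {penalty / 1e6:>10,.1f}M  ({pct:.4%} of revenue)")
```

Under these assumptions the flat cap comes to a few hundredths of one percent of annual revenue, while the turnover-linked penalties are hundreds of times larger. The exact ratio moves with the exchange rate, but the order of magnitude does not.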

The global reach of these platforms makes this harder still. TikTok’s recommendation algorithm is trained on engagement data from over a billion users across every jurisdiction. Meta’s systems don’t differentiate by country. A platform regulated to remove infinite scroll in Germany still has infinite scroll optimised on data from 3 billion users elsewhere. A national design obligation is a local intervention in a global architecture. This is why harmonisation matters, not just for legal coherence but for actual effectiveness. The European Digital Services Act’s harmonised framework, with Commission-level enforcement against Very Large Online Platforms, is structurally more capable of changing the market logic than any national ban. But only if it is designed with the financial penalties and design obligations that make compliance cheaper than non-compliance, and only if it is consistently enforced.

The attention extraction economy also produces a specific kind of competitive moat. The more data a platform has, the better its recommendation system. The better its recommendation system, the more engaging the platform. The more engaging the platform, the more users it attracts. The more users it attracts, the more data it has. This is a self-reinforcing loop that incumbents have been running for fifteen years. Regulation that adds compliance costs without breaking that loop entrenches incumbents rather than challenging them — because large platforms can absorb the compliance cost while smaller competitors cannot build the alternative at scale.

What the Australian Social Media Age Ban Has Taught Us

Australia’s Social Media Minimum Age Act came into force on 10 December 2025, banning children under 16 from holding accounts on designated social media platforms. It was the world’s first such ban. It passed in the last sitting week of 2024, introduced and passed within eight days, with a 24-hour public submission period that received 15,000 submissions, of which only 107 were published. This expedited process occurred shortly before a federal election that was called four months later in March 2025. FOI correspondence reported by Crikey and analysed by researcher Amanda Third showed the national Social Media Summit was designed to “build momentum for a decision already made,” not to deliberate on evidence. The political momentum was performative — the instrument was chosen for its communicative power rather than its causal effectiveness.

Six months in, the picture is clear.

The ban is not working on its own terms. The Molly Rose Foundation’s survey of 1,050 Australian 12-15 year-olds found 61% of those who previously had accounts on restricted platforms still have access to at least one active account. Among those still accessing banned platforms, 60-64% said the platform had taken no action to remove their account. The dominant story is not children cleverly circumventing the ban. It is platforms failing to comply.

The harm measures haven’t moved. The eSafety Commissioner’s own compliance report found no measurable drop in cyberbullying or image-based abuse complaints from children under 16 in the first three months of enforcement. These are the direct harm measures the ban was designed to move. They haven’t moved. Because the harm is in the architecture. And the architecture hasn’t changed.

Children were not consulted. The policy was designed by adults, about children, driven by adult anxieties, in a process that made meaningful child participation structurally impossible. A FOSI survey conducted in December 2025 found that 65% of Australian parents supported the ban, but only 38% of Australian children did. 56% of children said they feared losing important connections and support. The EU Kids Online network’s recent survey of 29,169 children across 19 European countries found that 45% disagree that an age ban would make them safer online. Children knew this wouldn’t work. Nobody adequately asked them. They just became media soundbites.

Vulnerable children have been made less safe. Teenagers who bypassed the ban by appearing as adults lost the safety features platforms built specifically for teen accounts. The children most likely to circumvent the ban — the most determined, often the most vulnerable — have been stripped of the protections designed for them.

The ban was built on the wrong argument. It was passed on a mental health narrative — the claim that social media is the primary driver of the youth mental health crisis. That causal claim was contested in the peer-reviewed literature at the time of enactment and remains contested. The government has since quietly shifted the rationale — writing recommender algorithms and endless-feed features into the legal definition of a harmful platform — without acknowledging it. The shift is correct: the harm is in the design architecture. But arriving at the right argument after passing the wrong instrument doesn’t fix the instrument.

The Social Adoption Curve and the Workaround Economy

Regulation that ignores how people actually behave in response to restrictions will consistently produce outcomes it didn’t intend. The social adoption curve — how technologies spread through populations, become embedded in social norms, and resist displacement — is not a peripheral consideration for digital regulation. It is central to whether regulation achieves anything.

The NBER working paper surveying 835 Australian teenagers four months after the ban found that only about one in four 14-15 year-olds comply. Most banned teens believe their peers are still using platforms and cite social reasons for continuing. Teenagers reported they would need roughly two-thirds of their peers to stop using social media before they themselves would stop — far above the share currently complying. The more influential teenagers disproportionately stay on the platforms. The ban hasn’t shifted the social norm, and without that shift, legal prohibition alone cannot move behaviour.
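One way to see why partial compliance does not tip the norm is a simple threshold model of the kind used in the sociology of collective behaviour. This is a swapped-in illustration, not the method used in the working paper. It assumes every non-compliant teenager shares the same threshold of roughly two-thirds (67%), that one in four comply at the outset, and that everyone observes the whole peer group; network structure and differences in influence are ignored. All names are invented for the example.

```python
# Toy threshold model of norm change (an illustrative ASSUMPTION, not the
# working paper's method). A teenager stops using social media only once the
# share of peers who have already stopped reaches their personal threshold.


def equilibrium_share_stopped(thresholds_pct: list[int],
                              initially_stopped: int,
                              max_rounds: int = 100) -> float:
    """Iterate until the number of teens who have stopped no longer changes.

    thresholds_pct: for each teen not yet complying, the percentage of the
        whole group that must have stopped before they will stop too.
    initially_stopped: teens who comply regardless of what peers do.
    """
    total = len(thresholds_pct) + initially_stopped
    stopped = initially_stopped
    for _ in range(max_rounds):
        # Integer comparison avoids floating-point edge cases:
        # threshold% <= stopped/total  <=>  threshold * total <= 100 * stopped
        joined = sum(1 for t in thresholds_pct if t * total <= 100 * stopped)
        new_stopped = initially_stopped + joined
        if new_stopped == stopped:
            break
        stopped = new_stopped
    return stopped / total


# Roughly the reported situation: 25 of 100 comply, the rest need two-thirds.
current = equilibrium_share_stopped([67] * 75, initially_stopped=25)

# Hypothetical contrast: if 70 of 100 had already stopped, the rest would follow.
tipped = equilibrium_share_stopped([67] * 30, initially_stopped=70)

print(f"starting from 25% compliance: {current:.0%} end up off the platforms")
print(f"starting from 70% compliance: {tipped:.0%} end up off the platforms")
```

In this toy setting, compliance that starts below the threshold stays exactly where it began, which is the dynamic the survey responses describe. Nothing inside the model moves the starting share; that has to come from outside it.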

This is not a failure of enforcement. It is a failure to understand how social technologies become embedded in the texture of everyday life. Social media is not a product that teenagers chose from a range of alternatives. For many, it is the primary infrastructure of peer connection, social identity, cultural participation, and information access. Removing it without providing alternatives — without investing in digital literacy, without creating safer spaces, without engaging with the social dynamics that make these platforms so central — is like removing a road and expecting people not to find another route.

The workarounds don’t just circumvent the regulation. They route around the safety infrastructure too. When teenagers bypass the ban they don’t find a safer internet. They find Discord servers, Reddit threads, private WhatsApp groups, and gaming platforms — all less moderated, less visible to adults, and more opaque to regulatory oversight. The Molly Rose Foundation data shows 43% of children are using gaming platforms more and 39% are using messaging apps more since the ban. These spaces are not covered by the ban, have weaker safety systems, and are harder for researchers, regulators, and parents to monitor. The unintended consequence of the ban has been to push children’s online activity into less regulated environments while maintaining the fiction that they are protected.

Social norm change does happen — and when it does, it can be powerful. But the evidence from decades of public health research suggests that norm change is produced by education, social modelling, environmental design, and cultural shift — not by prohibition that lacks meaningful enforcement and ignores the social dynamics that make the prohibited behaviour attractive. The ban cannot shift the norm because it doesn’t address why the platforms are so central to teenagers’ social lives in the first place. That is a design problem. And design is what the ban doesn’t touch.

The Age Verification Architecture: Surveillance by Another Name

The ban’s enforcement depends on platforms verifying users’ ages. Australia’s law requires “reasonable steps” without specifying what those steps must be, and mandates that verification data be deleted once its purpose is served.

In practice, platforms deployed a patchwork of unreliable methods. Facial recognition proved wildly inaccurate near the 16-year threshold. The government’s own age assurance technology trial found that no single solution suits all use cases — and that some vendors were proactively retaining biometric and identity data beyond legal requirements, anticipating future law enforcement or regulatory requests that didn’t yet exist. This is surveillance creep in documented, real-world form. The legislation required deletion. Vendors were building retention infrastructure instead.

The attack surface problem is structural. Every mandatory age verification requirement creates a chain of custody for sensitive identity information. Every link in that chain is vulnerable. The Discord breach of September 2025 — in which government identity documents submitted for age verification were accessed through a compromised third-party provider — illustrated exactly what mandatory verification creates. Third-party age assurance providers don’t just become attack vectors. They become commercially entrenched ones, with incentives to retain rather than delete the data they process.

There is also a fundamental confusion in the verification approach between identification and safety. Safety is a property of environments. Identification is a property of users. Making an environment safe does not require knowing who is in it. Article 28(3) of the EU’s Digital Services Act makes this explicit: compliance with child safety obligations “shall not oblige providers of online platforms to process additional personal data in order to assess whether the recipient of the service is a minor.” Europe’s primary platform safety instrument explicitly says you do not need identity verification infrastructure to protect children. The design obligation can be met through architecture, not identification.

The identification-surveillance-rights tension cannot be resolved within the verification framework. It can only be dissolved by the design framework, which doesn’t require it. If platforms are required to make their services safe by design for everyone, the question of who users are becomes largely irrelevant to the regulatory obligation.

The Kitchen Sink Problem: Two Instruments in Operation, One Horse Being Backed

Australia has two regulatory instruments already in operation that are pulling in opposite directions, and a third that the government is now hastily backing as evidence mounts that the first two are working against their intended outcomes while rapidly feeding a commercial boom in age assurance technologies.

The age ban says under-16s should not be on restricted platforms — access control through exclusion. It is being enforced now, with formal investigations underway against five major platforms.

The Phase 2 industry codes extend age assurance obligations across the commercial internet infrastructure that most Australians use daily — social media, messaging, gaming, search engines, hosting platforms, app stores, and operating systems. Surveillance architecture through identity verification at every layer of digital life. Already being implemented. Commercial infrastructure being built around it now.

These two instruments share a theory of change: identify users → gate by age → safety through exclusion and verification. They are the horses that won the race to be saddled first.

The Digital Duty of Care is the horse now being backed after the race has started. Released as an issues paper for consultation in May 2026 — eighteen months after the ban passed — it proposes that platforms must maintain safe environments through effective systems and processes, covering the entire tech stack including generative AI capabilities. It has a fundamentally different theory of change: design safe environments → safety through architecture.

It is not legislation. It is not law. It is a consultation document that may or may not become legislation. If it does, it will commence no earlier than 2028, into a regulatory environment where the surveillance architecture will have had three or four years of commercial entrenchment. Whether it actually passes is uncertain. Whether it retains its ambition through consultation, drafting, parliamentary debate, and an election cycle is more uncertain still. A government that releases issues papers is not the same thing as a government that passes legislation, as Australia’s stalled gambling reform, its undelivered media bargaining code amendments, and a dozen other promised instruments demonstrate.

What is certain is that the Safety-by-Design angle of the Duty of Care cannot be coherent alongside the instruments that arrived before it. The ban removed under-16s as a regulatory lever — platforms no longer have a commercial relationship with that demographic, so design obligations for that age group have no market teeth. The industry codes built identity verification infrastructure across the entire internet stack before the design obligation existed to challenge it. By the time the Duty of Care arrives — if it arrives — the surveillance architecture will be the established compliance baseline and the design obligation will accommodate itself to that baseline rather than replacing it.

The first two instruments share a theory of change that is incompatible with the third. No amount of drafting ingenuity can resolve that incompatibility because it is not a drafting problem. It is a sequencing problem. And sequencing problems cannot be fixed retroactively.

This is what happens when policy is made reactively, under political pressure, without a coherent theory of change. The ban for electoral momentum. The industry codes for the enforcement gap the ban couldn’t address. The Duty of Care for the evidence gap the ban made visible — a gap that the evidence predicted before the ban passed and that the compliance data has since confirmed. Each instrument designed in response to a different political moment, without knowledge of the others, building infrastructure that points in opposite directions.

The kitchen sink approach feels comprehensive. It is, in fact, incoherent — and nobody in the political process is stepping back to ask what theory of change actually connects any of this to children being safer.

The Senate committee that reviewed the ban knew it was insufficient. In the same report, it recommended a Digital Duty of Care, meaningful engagement with young people, and an independent review within 18 months. Eighteen months later, the Duty of Care is still only an issues paper, children were not meaningfully consulted, and the compliance data has confirmed what the committee already knew: the ban alone was not enough.

First Mover Entrenchment: Why the Wrong Instrument Wins

The sequencing problem is worse than a policy mistake. It is a policy mistake that forecloses correction.

Regulatory infrastructure creates commercial ecosystems. Commercial ecosystems create incumbents. Incumbents invest in maintaining their position. Regulators incorporate incumbent frameworks into compliance standards. Compliance standards become the definition of reasonable steps. The alternative has to fight the established definition rather than starting from first principles.

The age assurance industry had a structural commercial interest in the Australian ban passing. Without mandatory verification requirements their market is voluntary and limited. With mandatory requirements — extended through Phase 2 industry codes across the entire internet stack — they have a legislatively mandated, expanding global market. The cascade of age ban legislation following Australia is, from their perspective, a commercial opportunity of extraordinary scale. Every new jurisdiction that follows Australia is a new market.

The trial dynamic illustrates the problem precisely. The Australian age assurance technology trial was run by the Age Check Certification Scheme (ACCS), a UK-based company that specialises in certifying identity verification systems. The 53 vendors who participated were hoping to win contracts. Yoti, one of those vendors, was already operating as Meta’s age verification provider for Instagram and Facebook in Australia. The trial was partly evaluating a vendor that was already commercially embedded in the platform being regulated.

Meta’s participation in the trial was not a technology submission — it was a policy position paper arguing that Apple and Google should bear the age verification infrastructure burden at the operating system level. A platform being regulated used a technology evaluation process to argue someone else should build the infrastructure.

By the time the Digital Duty of Care might commence — 2028 at the absolute earliest — the age assurance industry will have had three or four years of commercial entrenchment. The ACCS accreditation framework will be established. Trusted provider lists will be published. Yoti, k-ID, and whoever else made the cut will have multi-year contracts with major platforms. The regulatory definition of “reasonable steps” will have been shaped by the infrastructure that already exists — which is surveillance-based, not design-based.

The Duty of Care arriving into that environment does not displace the surveillance architecture. It layers design obligations on top of it. Platforms satisfy their risk assessments by pointing to their age assurance compliance. Design-based safety becomes an aspiration accommodated within the surveillance infrastructure it was supposed to replace.

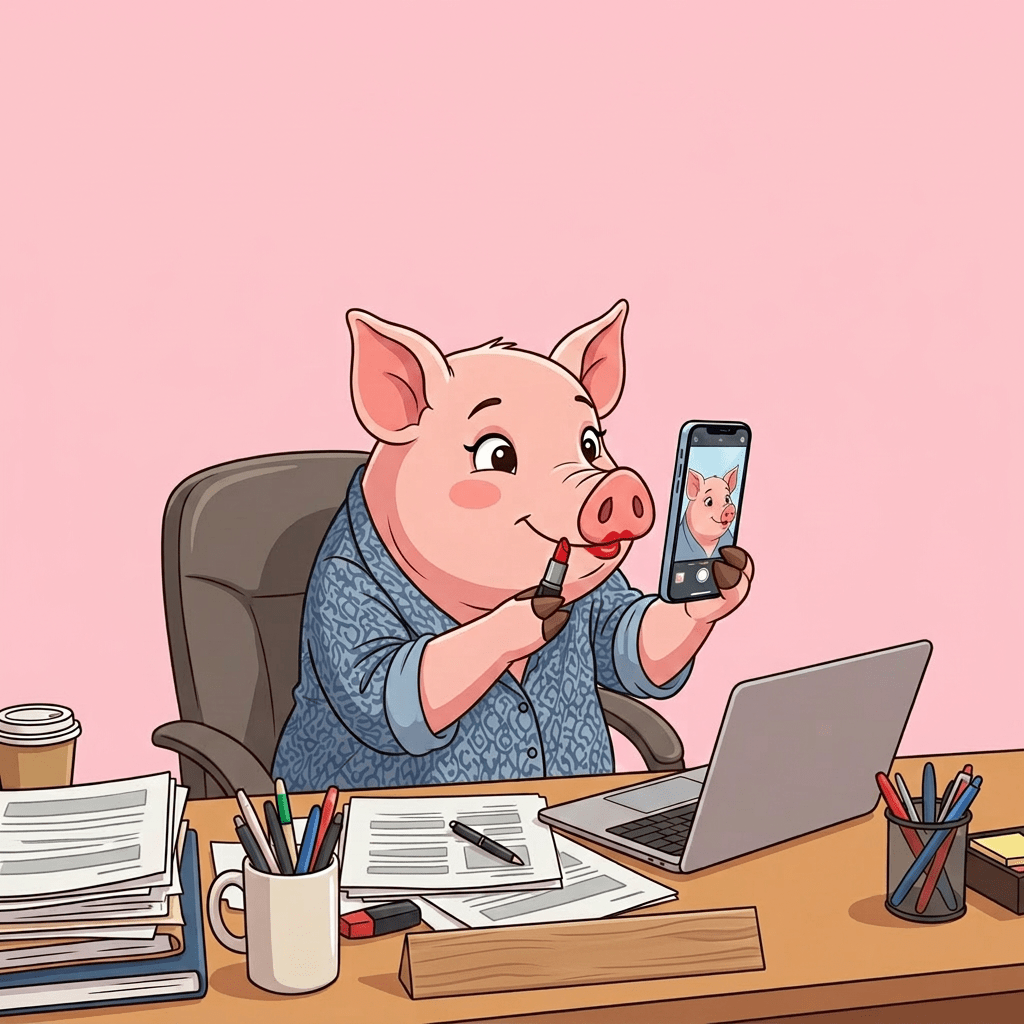

This is the lipstick. The pig is already there.

The Market Foreclosure Nobody Is Talking About

Building expensive surveillance infrastructure as the baseline compliance requirement for operating digital services locks out the competitive innovation ecosystem that could produce the alternatives we actually need.

Age verification at scale requires technical capability, regulatory accreditation, legal compliance across jurisdictions, and ongoing operational infrastructure. These requirements favour large, well-resourced incumbents who can absorb compliance costs. They disadvantage smaller players who might otherwise develop genuinely safer localised alternatives — platforms designed from first principles around user wellbeing rather than engagement maximisation, community-governed spaces, federated architectures, open-source tools, cooperative models.

A small company building a genuinely caring social platform for young people cannot afford the age verification infrastructure required to operate legally under the industry codes. The incumbent platforms — Meta, TikTok, Google — can. The regulatory requirement that was supposed to hold them accountable instead reinforces their monopoly position. This is not an incidental side effect. It is a predictable consequence of designing compliance infrastructure around the capabilities of the largest players.

The attention extraction economy already has a massive first-mover advantage built on fifteen years of engagement data, network effects, and platform lock-in. Surveillance-based compliance requirements compound that advantage. They create regulatory moats around incumbents that make it structurally harder for new entrants to compete — even new entrants with better, safer, more caring designs.

This matters because market competition, properly structured, is a more powerful mechanism for improving platform safety than any single regulatory instrument. If a platform with a genuinely caring orientation (one that doesn’t exploit users, builds in natural stopping points, and recommends content based on what users want rather than what maximises engagement) can compete effectively with Meta and TikTok, the incumbents face pressure to match it. If the regulatory architecture makes it impossible for that platform to exist, the pressure disappears and the incumbents have no incentive to change.

The caring orientation we want from digital environments is more likely to emerge from a diverse, competitive innovation ecosystem than from regulatory mandates on entrenched monopolists. Mandates matter — but they work best when they operate alongside competitive pressure that makes compliance in the spirit of the regulation commercially rational, not just legally required.

What the Duty of Care Gets Right — And Why It Arrived Too Late

The Australian Digital Duty of Care issues paper is, on its own terms, a well-designed framework. It is worth being clear about what it gets right, because the argument here is not that the Duty of Care is wrong. It is that it arrived too late, in the wrong sequence, into an environment that has already foreclosed much of its potential.

It proposes design obligations covering the entire tech stack — including generative AI capabilities embedded in service provision. This is genuinely forward-looking. Generative AI is no longer just a discrete tool that users consciously choose to engage with. It is disappearing into the infrastructure of everyday digital experience — embedded in recommendation systems, content generation, conversational interfaces, image manipulation, synthetic social interaction. The harm is becoming invisible precisely as it becomes more pervasive. A regulatory framework that covers AI as it is actually deployed, rather than as a separate product category, is the only framework that can keep pace with that technological shift.

It proposes penalties of 5% of global annual turnover or $50 million, whichever is greater: proportionate, not performative. For Meta, whose annual global revenue exceeded USD200 billion in 2025, 5% of global turnover would exceed USD10 billion. That changes the market calculation in a way that the ban’s AUD49.5 million flat cap never could.
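The two-limb penalty rule can be sketched as a short function. This is an illustration under stated assumptions: turnover and the fixed amount are treated as being in the same currency (no conversion is attempted), the function name is invented, and the sample turnover figures are hypothetical round numbers chosen to show where each limb applies.

```python
# Sketch of a "greater of a fixed amount or a share of turnover" penalty rule.
# ASSUMPTION: turnover and the fixed amount share one currency unit.

FIXED_AMOUNT = 50_000_000   # the "$50 million" limb
TURNOVER_RATE_PCT = 5       # the 5%-of-global-annual-turnover limb


def maximum_penalty(global_turnover: int) -> int:
    """Return whichever limb of the rule is larger for a given turnover."""
    proportional = global_turnover * TURNOVER_RATE_PCT // 100
    return max(proportional, FIXED_AMOUNT)


# Turnover at which the proportional limb overtakes the fixed amount.
crossover_turnover = FIXED_AMOUNT * 100 // TURNOVER_RATE_PCT

# Hypothetical turnovers: a small service, the crossover point, and a
# platform on the scale of the revenue figure quoted earlier.
for turnover in (200_000_000, crossover_turnover, 200_000_000_000):
    print(f"turnover {turnover:>15,} -> maximum penalty {maximum_penalty(turnover):>14,}")
```

The fixed amount only binds for services with turnover below the crossover point. Above it, the ceiling grows with the size of the business, which is what makes the rule proportionate for the largest platforms.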

It includes researcher data access, independent audit powers, transparency requirements, and executive accountability. These are the instruments of ongoing accountability rather than one-time compliance. They create the evidence base that regulatory decisions require and the governance structure that makes accountability real rather than performative.

This is, essentially, what Australia should have passed instead of the ban. It is what Zoe Daniel’s Digital Duty of Care Bill proposed when it was introduced on 25 November 2024, four days after the social media ban was tabled. That bill lapsed when Daniel lost her seat in the federal election. The right framework existed. The wrong instrument passed instead.

But the Duty of Care is still only an issues paper. Not legislation. Not law. Pre-consultation, with no timetable for introduction, no guarantee of passage, and a 12-month commencement period after passage. It will not be operational before 2028 — into a regulatory environment where the surveillance architecture will have had three or four years of commercial entrenchment, where the age assurance industry’s trusted provider lists will have defined what compliance looks like, and where the market foreclosure of smaller competitors will have narrowed the innovation ecosystem that the Duty of Care depends on to work.

The right framework. The wrong sequence. And by the time it arrives, the pig will be so thoroughly established that the lipstick is all that’s visible.

Toward a Caring Digital Environment: What the Theory of Change Actually Looks Like

The alternative starts with a different question. Not “how do we stop harm” — a defensive, prohibitionist frame that produces bans and verification infrastructure. But “how do we cultivate environments that lean toward care” — a constructive frame that produces design obligations, competitive innovation, and genuine safety.

A caring orientation in platform design means: recommendation systems that notice when a user is in distress and surface support rather than amplifying distress content. Interfaces that create natural stopping points rather than eliminating them. Social feedback mechanisms that reinforce connection and mutual support rather than performance and comparison. Defaults that create safe conditions rather than exposure. Design that treats users as people with complex needs rather than attention units to be harvested. GenAI capabilities that are designed to support rather than exploit the people they interact with. Architecture that serves the user’s actual interests rather than the platform’s engagement metrics.

This is achievable. Elements of it already exist. The question is whether regulation mandates it as the default or leaves it as an optional add-on to engagement-maximising architecture.

The coherent theory of change — the one that actually delivers what we said we wanted — follows this sequence:

Enforce existing obligations first. Platforms already prohibit under-13s. Make them prove it, with turnover-linked penalties for failure. The EU’s DSA enforcement is already doing this. Start where the law already is.

Design obligations with proportionate penalties. Risk assessments of harmful features, required mitigation, mandatory transparency, researcher data access, audit powers, executive accountability. Article 28 of the DSA with teeth. Financial penalties that make the harmful architecture more expensive than the safe one.

Protect the innovation ecosystem. Proportionate requirements for smaller platforms. Safe harbours for open-source, federated, and community-governed architectures. Active support for alternatives that don’t rely on engagement maximisation. The competitive pressure that makes market incentives work alongside regulatory mandates.

Age-appropriate spaces by design — not by identity. Default-safe architecture for younger users that adapts to developmental needs without requiring biometric data or government identity documents. Opt-in to higher-risk features rather than opt-out of safety. Design that serves the whole arc of young users’ digital lives.

Graduated access rather than cliff edges. If age-differentiated access to specific features is warranted, implement it gradually with digital literacy scaffolding, parental engagement, and design safeguards. No binary exclusion followed by unrestricted access at an arbitrary threshold.

Children’s voices throughout. The UN Convention on the Rights of the Child gives children the right to be heard in decisions that affect them. That right was not honoured in Australia’s ban. It must be built into any regulatory process that claims to act in children’s interests.

International coordination. Design obligations without international coordination are local interventions in a global architecture. Harmonised standards, mutual recognition of regulatory findings, and coordinated enforcement against platforms that arbitrage regulatory differences are prerequisites for regulation that actually changes global market logic rather than just shifting harm between jurisdictions.

This sequence puts design obligation first, surveillance infrastructure never, competitive innovation throughout, and children’s voices in the room from the beginning.
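As a rough illustration of what "opt-in to higher-risk features rather than opt-out of safety" could look like in practice, the sketch below models account settings in which every higher-risk feature starts switched off. It is a minimal sketch under stated assumptions: the class, its methods, and the feature identifiers are hypothetical (the features are drawn from the design elements named earlier in this piece), and no real platform API is implied.

```python
from dataclasses import dataclass, field

# Hypothetical feature identifiers, taken from the design features discussed
# earlier in the article. No real platform's API is implied.
HIGHER_RISK_FEATURES = frozenset({
    "infinite_scroll",
    "algorithmic_recommendations",
    "push_notifications",
    "disappearing_content",
})


@dataclass
class AccountSettings:
    """Default-safe settings: nothing higher-risk is on until the user opts in.

    The record deliberately holds no age, biometric, or identity fields; the
    safe default applies to every account equally.
    """
    enabled: set[str] = field(default_factory=set)

    def opt_in(self, feature: str) -> None:
        """Turn a higher-risk feature on at the user's explicit request."""
        if feature not in HIGHER_RISK_FEATURES:
            raise ValueError(f"unknown feature: {feature}")
        self.enabled.add(feature)

    def opt_out(self, feature: str) -> None:
        """Turn a feature back off; harmless if it was never enabled."""
        self.enabled.discard(feature)

    def is_enabled(self, feature: str) -> bool:
        return feature in self.enabled


settings = AccountSettings()
print(settings.is_enabled("infinite_scroll"))   # a new account starts safe
settings.opt_in("infinite_scroll")
print(settings.is_enabled("infinite_scroll"))   # enabled only after opting in
```

The design choice worth noticing is what the record leaves out: the safe state is simply the state every account starts in, so there is no age or identity check to pass before it applies.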

What Europe and the UK Can Still Do

Europe is not Australia. It has better foundational regulatory architecture, stronger privacy law, and a procedural framework — the DSA’s notification requirement — that is actively slowing the race of national ban legislation while the Commission builds harmonised alternatives.

Article 28 of the DSA already exists. It requires design-based safety obligations. It explicitly says compliance does not require processing additional personal data to identify minors. The EU Kids Online network — 29,169 children across 19 European countries — has told European policymakers to implement it. The Digital Fairness Act, expected Q4 2026, can extend design harm obligations with proportionate penalties and cover the emerging architecture of generative AI harm.

But Europe is not immune to the same political dynamics. France has passed its ban through the National Assembly. Germany’s governing coalition is calling for an under-14 ban. The age verification industry is positioning for the European market. The EUDI Wallet is being deployed. The trusted provider lists are being established.

The window closes when national bans become entrenched political commitments. When age verification industry codes are written into DSA compliance frameworks. When first mover entrenchment forecloses the design-based alternative. When the competitive innovation ecosystem is locked out by compliance infrastructure it cannot afford.

Once age bans pass, they cannot be repealed. Australia’s ban will stay on the books while the evidence continues to show it isn’t working, while the Duty of Care is quietly developed around it, and while the surveillance architecture it generated becomes the default condition of Australian digital life. No government repeals a signature child protection measure. The political ratchet only goes one way.

The lesson is not that child online safety doesn’t matter. It matters enormously. The lesson is that the instrument chosen determines what kind of safety is built — and what kind of digital future everyone inherits. An internet that leans toward care is achievable. It requires design obligations, proportionate penalties, competitive innovation, international coordination, and children’s voices in the room. It does not require surveillance infrastructure, biometric data, identity verification at every layer of the stack, or the foreclosure of the competitive ecosystem that could build the alternatives we need.

Australia chose the instrument that was easier to communicate. Europe still has the chance to choose the one that works.

But the window will not stay open indefinitely. And the pig is already being prepared for its close-up.

Updated 9 June 2026: Legislative timeline corrected, currency notations clarified, and primary source links added throughout